Words

an excerpt from my book manuscript

Like most people's, my day-to-day experience consists of a continual, self-conscious cavalcade of self-criticism. Everything about myself that comes before my mind, everything that appears on the spotlighted stage at the Cartesian Theatre, is made to do a pirouette and then is bathed in boos and hisses.

Published Essays

in other words, more words

An excerpted chapter from my memoir manuscript titled “Halfway Twice Is Not Yet Once”

at SLAB literary journal (click then scroll up a tad)

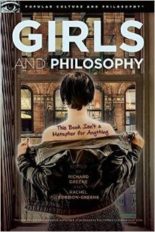

How Not To Watch Girls

at Amazon